There is a quiet, tectonic shift happening in the way revenue teams operate, and most leaders are looking in the wrong direction. They are fixated on LLM benchmarks and model rankings, judging the future through the "keyhole" of their own traditional business experience. They see headlines about Anthropic’s soaring enterprise adoption and dismiss them as more AI market hype.

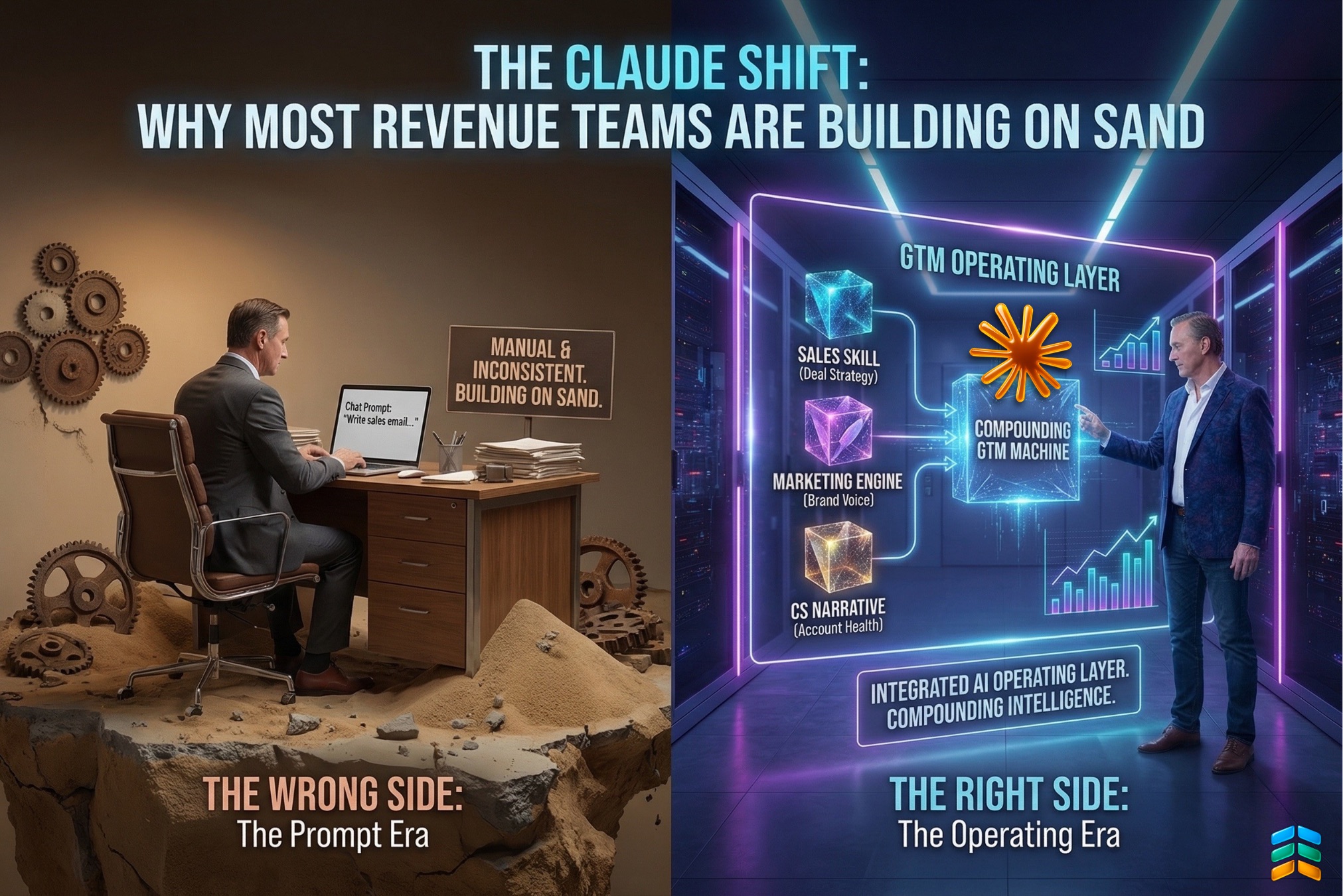

But the real story isn't about which model is "smarter." It is about a fundamental change in operating mechanism. We are witnessing the end of the "Co-pilot" era, which has quietly created a new kind of financial drag, and the birth of the AI Operating Layer.

The Myth of Mastering Prompt Engineering

For the last two years, the industry has sold a lie: that the value of AI lies in the "prompt." The practice even earned its own buzzword: "prompt engineering." We’ve been told that if you just find the right sequence of words, the AI will hand you a finished campaign or a perfect sales email.

This approach is a dead end. It’s manual, it’s inconsistent, and it doesn't scale. When your reps start every morning with a blank chat window, they aren't building a business; they are performing an experiment. There is no shared context, no memory, and no compounding intelligence. The 50th prompt is no better than the first.

But leaders who are "once bitten, twice shy" have learned from this. They are now engineering Outcome-Based Autonomy: persistent, compounding GTM machines that finally show up on the P&L.

The Physics of the "Wrong Side": The Supervision Trap

This failed reliance on the "magic prompt" leads directly to the Supervision Trap. We are all guilty of it at some level: adding expensive seat licenses for "assistants" while our team headcount remains stubbornly tied to revenue growth.

The blank chat window your reps open each morning is a genre of AI we can call Ephemeral AI. Because the session has no institutional memory, the AI "forgets" who you are, what you sell, and why you win the moment the window is closed. Every session starts from zero, so nothing compounds.

This risk is no longer theoretical; with the release of autonomous 'agent teams' in models like Claude Opus 4.6, the Supervision Trap has evolved into a structural crisis where the human auditor is being engineered entirely out of the loop.

When an AI drafts a response but a manager must spend ten minutes reviewing it for accuracy and tone, you haven’t saved money; you’ve simply shifted labor from "doing" to "supervising". This creates a "Hype Hangover" where the cost of human oversight cancels out the gains of machine output. If you are still trapped in this loop, you aren't scaling—you are just paying a "Supervision Tax" on every decision.
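The arithmetic behind the Supervision Tax is simple enough to sketch. The Python below is a back-of-the-envelope model, not a measurement: `DraftWorkflow`, its fields, and all minute values are hypothetical. It shows how review time offsets the gross savings of an AI draft; when the review takes as long as the drafting time it replaced, the net saving is zero.

```python
from dataclasses import dataclass


@dataclass
class DraftWorkflow:
    """Per-draft minutes for one AI-assisted task (all values hypothetical)."""

    manual_minutes: float  # time a rep would spend writing the draft by hand
    ai_minutes: float      # time to prompt the AI and collect its draft
    review_minutes: float  # time a manager spends checking accuracy and tone


def supervision_tax(w: DraftWorkflow) -> float:
    """Share of the gross time savings consumed by human review."""
    gross_savings = w.manual_minutes - w.ai_minutes
    if gross_savings <= 0:
        raise ValueError("the AI draft must be faster than the manual draft")
    return w.review_minutes / gross_savings


def net_minutes_saved(w: DraftWorkflow, drafts: int) -> float:
    """Minutes actually saved across `drafts` drafts once review is counted."""
    return drafts * (w.manual_minutes - w.ai_minutes - w.review_minutes)


# Hypothetical case: a 15-minute email becomes a 5-minute prompt,
# but a manager then spends ten minutes reviewing it.
workflow = DraftWorkflow(manual_minutes=15, ai_minutes=5, review_minutes=10)
print(supervision_tax(workflow))         # 1.0 -> review consumes all of the savings
print(net_minutes_saved(workflow, 200))  # 0 -> nothing saved across 200 drafts
```

Shrinking `review_minutes` is the only way this model produces real savings, which is the argument for reducing supervision rather than adding more assistants.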

In this "Wrong Side" model, labor remains a depreciating overhead cost. To double your leads, you still (roughly) double your headcount; your margins stay flat because your costs scale alongside your success. This is the "Wrong Side" of the shift: AI as an occasional assistant. It is a tool used in isolation that leaves no footprint on the organization’s collective wisdom.
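The flat-margin claim can be checked with a deliberately simple linear model. Assume, for illustration only, that lead volume L drives both revenue and labor cost, with revenue per lead r and fully loaded labor cost per lead c, and ignore fixed costs:

```latex
\begin{aligned}
R(L) &= rL, \qquad C(L) = cL \\
m(L) &= \frac{R(L) - C(L)}{R(L)} = \frac{rL - cL}{rL} = \frac{r - c}{r} \\
m(2L) &= \frac{2rL - 2cL}{2rL} = \frac{r - c}{r} = m(L)
\end{aligned}
```

Because L cancels, doubling leads leaves the margin m unchanged. In this model the only levers are the cost per lead c and the revenue per lead r, which is why adding headcount in step with volume keeps margins flat.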

For a deeper look at why this trap has become a structural crisis, read Jim’s analysis: The Supervision Trap Just Snapped Shut: Why Opus 4.6 Changes the Liability Equation.

The Mechanism of the "Right Side": Persistent Skills

The reason the "Claude Shift" is real has nothing to do with venture capital or revenue numbers. Revenue doesn't prove something works; it only proves it was sold. The true proof is found in the mechanism.

The "Right Side" of the shift is defined by the move toward Claude Skills. A "Skill" is not a prompt. It is a persistent, brand-configured behavioral layer that lives inside your GTM stack. When you move to the "Right Side," you stop asking the AI to "write an email." Instead, you work inside a persistent environment where the AI already knows your Ideal Customer Profile (ICP), your brand voice, your competitive landmines, and your historical win/loss data.

On the right side of the shift:

This transition from "Chatting" to "Operating inside a System" is how you move an AI from a "Co-pilot" to a "Department Head," creating an Appreciating Software Asset.

Layer 1: The Claude Skill (Persistent Behavior)

In practice, revenue teams build this layer with features like Claude Projects, creating a "Context Vault": a reasoning system where the AI holds the same "memory" of a client relationship as a 10-year veteran employee.

This infrastructure goes beyond simple text generation to create an appreciating software asset, in which a model such as GPT-5.4 synthesizes large datasets alongside Fathom meeting data and HubSpot CRM signals to reason on behalf of the GTM team.

When a rep enters this environment, they aren't prompting from scratch; they are stepping into a pre-configured cockpit. Because this context is persistent, the intelligence compounds. You stop being a supervisor of tasks and start being a Curator of Logic.
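To make "persistent context" concrete, here is a minimal Python sketch of the mechanism. It is not Anthropic's Skills or Projects API; `SkillContext`, `build_request`, every field name, and all sample values are hypothetical. The idea it illustrates: the ICP, brand voice, competitive landmines, and win/loss lessons are stored once and attached to every request, and each recorded outcome is inherited by every later request.

```python
from dataclasses import dataclass, field


@dataclass
class SkillContext:
    """Hypothetical persistent context for one GTM Skill (illustrative field names)."""

    name: str
    icp: str
    brand_voice: str
    competitive_landmines: list[str] = field(default_factory=list)
    win_loss_notes: list[str] = field(default_factory=list)

    def record_outcome(self, note: str) -> None:
        """Store a win/loss lesson so every later request inherits it."""
        self.win_loss_notes.append(note)

    def build_system_prompt(self) -> str:
        """Render the stored context into instructions attached to every request."""
        landmines = "\n".join(f"- {item}" for item in self.competitive_landmines)
        lessons = "\n".join(f"- {note}" for note in self.win_loss_notes)
        return (
            f"Skill: {self.name}\n"
            f"Ideal Customer Profile: {self.icp}\n"
            f"Brand voice: {self.brand_voice}\n"
            f"Competitive landmines:\n{landmines}\n"
            f"Win/loss lessons:\n{lessons}"
        )


def build_request(skill: SkillContext, task: str) -> dict[str, str]:
    """Pair the persistent context with a short task instead of a blank prompt."""
    return {"system": skill.build_system_prompt(), "user": task}


# Usage with hypothetical values: the rep supplies only the one-line task.
skill = SkillContext(
    name="Outbound follow-up",
    icp="Mid-market SaaS companies with a dedicated RevOps team",
    brand_voice="Direct and plain-spoken; no jargon",
    competitive_landmines=["Expect rivals to lead on price; anchor on implementation support"],
)
first = build_request(skill, "Draft a follow-up to today's discovery call")
skill.record_outcome("Won: security questions answered in the first email")
second = build_request(skill, "Draft a follow-up to today's discovery call")
```

The design choice worth noticing is that the rep's input stays one line while the shared context grows with each recorded outcome; `second` carries a lesson that `first` did not.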

To understand how this shift from task-based efficiency to outcome-based autonomy changes the math of your business, read: The Economic Architecture of Autonomy: Why Your Next "Hire" Should Be an AI Agent.

Layer 2: MCP (The Autonomous Operator)

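What makes Skills actually powerful is the Model Context Protocol (MCP), an open standard for connecting AI models to external tools and data sources. Without MCP, Skills are smart templates. With MCP, they can read from and act on the systems your team already runs, such as your CRM, which turns them into autonomous operators that bridge the gap between reasoning and action.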
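Mechanically, MCP runs over JSON-RPC 2.0: a client sends a `tools/call` request naming a tool and its arguments, and a server executes the tool and returns the result. The Python below is a toy, stdlib-only dispatcher modeled loosely on that request and result shape. It is not the official MCP SDK, it skips the initialization handshake and `tools/list` discovery, and `update_deal_stage` and the in-memory `CRM_DEALS` table are hypothetical stand-ins for a real CRM integration.

```python
import json
from typing import Any, Callable

# Hypothetical in-memory stand-in for a CRM; a real server would call the CRM's API.
CRM_DEALS: dict[str, dict[str, str]] = {"D-100": {"company": "Acme", "stage": "Discovery"}}


def update_deal_stage(deal_id: str, stage: str) -> str:
    """Hypothetical tool: move a deal to a new pipeline stage."""
    if deal_id not in CRM_DEALS:
        raise ValueError(f"unknown deal {deal_id}")
    CRM_DEALS[deal_id]["stage"] = stage
    return f"{deal_id} moved to {stage}"


# Registry of tools this toy server exposes, keyed by tool name.
TOOLS: dict[str, Callable[..., str]] = {"update_deal_stage": update_deal_stage}


def handle_request(raw: str) -> str:
    """Dispatch a JSON-RPC 2.0 'tools/call' request to a registered tool."""
    req: dict[str, Any] = json.loads(raw)
    if req.get("method") != "tools/call":
        error = {"code": -32601, "message": "Method not found"}
        return json.dumps({"jsonrpc": "2.0", "id": req.get("id"), "error": error})
    params = req.get("params", {})
    name = params.get("name")
    if name not in TOOLS:
        error = {"code": -32602, "message": f"Unknown tool: {name}"}
        return json.dumps({"jsonrpc": "2.0", "id": req.get("id"), "error": error})
    try:
        text = TOOLS[name](**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    except ValueError as exc:
        # Tool failures go back to the model as data, so it can reason about them.
        result = {"content": [{"type": "text", "text": str(exc)}], "isError": True}
    return json.dumps({"jsonrpc": "2.0", "id": req.get("id"), "result": result})


# Usage: the model has decided a deal should advance and emits this request.
call = {
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "update_deal_stage", "arguments": {"deal_id": "D-100", "stage": "Proposal"}},
}
print(handle_request(json.dumps(call)))
```

The split matters for the argument above: the model only decides *what* should happen, while the server-side tool performs the write, which is where governance and audit logging would attach.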

Layer 3: Reasoning Density as Infrastructure

The true proof of this shift isn't found in top-line revenue—it’s found in Reasoning Density. This is the critical threshold where a company moves beyond simple, automated tasks and into systemic logic.

When intelligence is truly embedded inside your company’s operating system, you finally decouple your headcount from your revenue growth. This is the moment you cross the Intelligence Poverty Line: moving from a business that scales through expensive human labor to one that scales through an appreciating asset of autonomous logic.

Sidebar: Understanding the Intelligence Poverty Line

The Intelligence Poverty Line is the invisible threshold where a company’s unit economics either thrive through autonomous logic or collapse under the weight of human overhead.

For a deeper dive into the economics of this threshold, read Jim’s full analysis: The Economic Architecture of Autonomy: Why Your Next "Hire" Should Be an AI Agent

From Theory to Factory: The AI GTM Accelerator

The gap between these two sides is where most companies fail. They recognize the "Right Side" is better, but they don't have the blueprint to build the infrastructure. We built the AI GTM Accelerator—a 90-day, 12-session intensive—specifically to move teams from "Prompters" to "Operators".

Our core design principle is that we don’t teach; we implement. Every session follows a rigorous four-part structure: Frame, Demo, Practice, and Debrief.

Phase 0: The "Mirror of Truth"

Before we build, we must assess. The accelerator begins in Week 1 with a GTM Operations Assessment. This acts as a "Mirror of Truth" for executives, mapping the current state of systems, data quality, and AI readiness.

Most leaders blame their AI for poor results, but this diagnostic often reveals the real culprit: Data Silos. If your CRM data is stale or your systems aren't connected, your AI ROI is dead on arrival.
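As an illustration of the kind of check a data-readiness diagnostic might run, here is a minimal Python sketch. It is not the Accelerator's actual assessment; `CrmRecord`, its fields, the 90-day staleness threshold, and the sample records are all hypothetical. It measures the two failure modes named above: stale records and records not connected to another system.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class CrmRecord:
    """Hypothetical CRM record; field names are illustrative, not a real CRM schema."""

    record_id: str
    last_updated: date
    call_notes_linked: bool  # True if meeting notes from another system are attached


def readiness_report(
    records: list[CrmRecord], today: date, max_age_days: int = 90
) -> dict[str, float]:
    """Share of records that are stale or disconnected (hypothetical 90-day default)."""
    if not records:
        return {"stale_share": 0.0, "unlinked_share": 0.0}
    cutoff = today - timedelta(days=max_age_days)
    stale = sum(1 for r in records if r.last_updated < cutoff)
    unlinked = sum(1 for r in records if not r.call_notes_linked)
    return {"stale_share": stale / len(records), "unlinked_share": unlinked / len(records)}


# Hypothetical sample data for illustration only.
records = [
    CrmRecord("A-1", date(2024, 1, 5), call_notes_linked=True),
    CrmRecord("A-2", date(2024, 6, 20), call_notes_linked=False),
    CrmRecord("A-3", date(2024, 7, 1), call_notes_linked=True),
    CrmRecord("A-4", date(2023, 11, 30), call_notes_linked=True),
]
print(readiness_report(records, today=date(2024, 7, 15)))
# {'stale_share': 0.5, 'unlinked_share': 0.25}
```

A real assessment would look at many more signals, but even two ratios like these show whether the AI will be reasoning over current, connected data.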

This Is What We Are Building: The GTM Skills Inventory

The Accelerator is a Skills Factory. Over 12 weeks, we turn core revenue workflows into persistent AI capabilities.

The Compounding Revenue Machine

The shift from Prompting to Operating is the difference between a team that is constantly starting over and a team that is building a moat. When your AI environment knows your customers as well as your best veteran, you have stopped experimenting. You have started scaling.

The most powerful dividend is Trust as a Financial Asset. The faster you can trust your agents to operate within "Glass Box" governance, the faster you can scale without hiring.

Start Your Journey: The Phase 0 Diagnostic

The Claude shift has started. It isn't about buying a seat; it’s about building a system.

We invite you to step onto the "Right Side" of the shift starting with our Phase 0: Kickoff & GTM Operations Inventory. This is a low-risk, high-impact diagnostic session designed to map your current state and identify the critical gaps in your AI readiness.

Don't commit to a heavy implementation before you know if your foundation can support it. Let us provide the "Mirror of Truth" for your revenue engine.

Click here to schedule your Phase 0 Assessment and see the "Right Side" of the shift for yourself.